Expressive Movement and Physical Model Animation

Improvements Required of the Captain Scarlet Television Series

Sequence Time 7:55-8:10

Lindsay Grace

University of Illinois, Chicago

PhD Student

Overview and Abstract

Debuting in 2005, the original animated series, Gerry Anderson’s New Captain Scarlet, demonstrates the continued progress of computer-generated imagery (CGI) in producing fantastic images of fictional worlds. The techniques employed by the staff at Centurion are familiar, effective methods for animating worlds within the low budgets and short production cycles of television animation. While at first glance the CGI is convincing, attention to the natural physics of everyday life is highly lacking. As with the animation of the 1960s and 1970s, Anderson’s animation substitutes broad brush strokes for the intimate details that convince an audience to believe in the fiction he has created. This parallels Anderson’s original Supermarionation effect, which, while technically interesting, required suspension of disbelief to immerse the audience.

In this paper I have chosen to evaluate a single scene that exemplifies the need to allocate more resources toward the integration and animation of characters, props, and models in composited scenes. This paper serves to illuminate the successes and failures of two non-contiguous character animation sequences, each 15 seconds long. The first is between 7:55 and 8:10, while the second, located at 11:30 to 11:45, serves only to emphasize what is demonstrated in the first sequence. Both sequences are taken from Captain Scarlet Series 2, Episode 2, entitled Duel. I posit that a few technological and artistic production changes available in 2005 could have greatly enhanced the quality of the production without significantly increasing budget or time to production.

Introduction and Background

Why Critique Character Animation?

American and British audiences have historically emphasized the movements of characters in their evaluation of quality animation. However, the production’s attention to character animation can be as plastic as Anderson’s Supermarionated[1] puppets. Foreshortened by a shallow script and overshadowed by the series’ traditionally fantastic vehicles, the characters are the visual element that requires the most attention.

Unlike moving 20-ton vehicles across the lunar surface, or shuttling a jet-fueled motorbike through traffic, the movement of fabric, skin, and the human body is part of our everyday experience. It is these aspects that the critical mind recognizes most immediately as artificial. It is these elements that require the most detail, the most physical accuracy, and the attention of a cunning, creative artist. It is these details that inspire audiences to fear for the characters that face danger in the action sequences or wish death to the evil villains of a modern hero’s epic.

It is no small effort to convince audiences of a reality. Where in the 1960s Anderson’s puppetry may have entertained young audiences, that same demographic’s point of reference is now a comprehensive library of video games, computer-enhanced live-action film, and motion-graphic-embellished advertising[2][3]. Today’s audience even has experience playing with low-budget, simple-to-use character and environment animation technology like Daz Studio and Bryce. The notion of engaging the visual appetite of an audience through lightly veiled rigging has been tossed aside like an old toy. As audiences become better acquainted with the magic tricks of simulated reality, they become more critical of them[4]. Audiences need more fidelity to real-world physics if they are to believe the world an animator has constructed.

Ironically, the Hypermarionation technique is subject to many of the same limitations as the Supermarionation puppetry technique of Anderson’s past. Like the puppets of the original animation, the characters lack subtle body expression, realistic hair and skin, and convincing interaction with objects. Beyond these shared challenges, CGI introduces palpable problems with physical movement distinct to a computer-generated world.

Analysis: Subtle Movement of Realistic Anatomy

Do You Know Body Language?

The Captain Scarlet series was animated using a combination of motion capture and key frame animation[5]. If executed correctly, motion capture encourages physical accuracy by providing a real-world reference for the animation process. When a motion capture actor has appropriately executed their movements, the animation should have accurate joint offsets, joint displacement, and movement hierarchy. While it is the animator’s responsibility to refine the content of a motion capture scene, the reference data should provide adequate information for a realistic set of movements. Careful analysis of the scene indicates that the character movements are not accurate.

To their credit, the animators have done an excellent job of preserving some of the constant, imperfect movements of the motion capture actors as they speak. Female character 1[1], for example, moves her arm as she speaks and sways slightly. These subtle visual cues confirm the audience’s expectations of a realistic character. People move when they speak.

People also breathe, sigh, and emote when they speak. As is typical of conventional motion capture systems, these subtle movements of characters are absent. This is because most projects use motion capture to record only the focal visual cues[6]. In an action sequence, these cues are typically character or prop movements such as firing a weapon or mounting a motorcycle. Motion capture may also be used to capture specific facial movements. In both of these motion capture activities, the focus is on the pantomimed, sometimes exaggerated movements that are easily discerned from a distance. In a conventional motion capture animation pipeline, however, animators insert nuanced and embellished character movements to enhance the captured data.

As with most TV series, budgets and time to production were short for this series (insert reference). If the series had had the resources of a major feature-length film, it could have hired additional animators and developers to implement and use custom controls to accentuate movement of the chest and neck. However, modern production systems also offer predefined character model movements that provide some of the most traditional visual cues. Softimage XSI, for example, provides a character animation suite of tools that maps routine chest movements associated with breathing to an anatomically correct model[7]. The same enhancements are available through Alias and other commercial vendors.

At

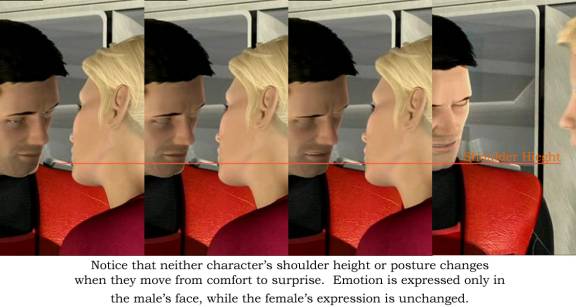

8:01, the short falling of whole body expression is best indicated. While male character 1 demonstrates

disappointment by the bend of his brow and the lowering of his head, his

shoulders and posture remain unchanged.

This sequence lacks the characteristic lowering of the shoulders and or

stiffening of posture to indicate anger. This also would have been an excellent

opportunity for a character animator to insert a sigh of frustration.

Why Are You Acting Like a 5th Grader?

Real bodies communicate as they speak. The characters in Captain Scarlet lack the cooperative efforts of the upper body to emphasize what a character says. While much effort is spent moving eyes, eyebrows, and mouths, little is spent articulating expression through the whole body. Live-action actors who gave performances equal to these virtual actors would be criticized for not being in their bodies. The performances are again one-dimensional, relying largely on the first order of communication but ignoring secondary communication in the hands, shoulders, and posture. These characters are essentially rigid bodies from the clavicle to the sacrum. Some might say their movements are like those of middle schoolers on the school stage: rigid, pantomimed, and unemotional.

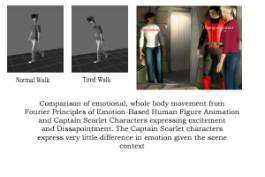

If you compare the expressions in the figure above with the Captain Scarlet model, the difference in posture is probably the most indicative physical element. While it may be true that some of this posturing is affected by the motion capture process itself, the animators have license to produce these changes through key frames. If, for example, a character must express disappointment, the animator can key a movement from an upright posture to a slouch while keying the facial expressions.

Several researchers have worked toward indicating emotion in full character motion. Chi et al. even provide a systematic approach called the EMOTE model, which was used to render real-time animations affected by effort and shape parameters. Their research does an excellent job emphasizing the “important role torso plays in gesture and in the depiction of a convincing character”[8].

If Only We Could Breathe

The pace of a character’s breathing communicates the character’s emotional state. Although there are several moments where chest movements are inserted, most of them do not punctuate the character’s emotional state. In the aforementioned scene, for example, a sigh from the romantic couple would punctuate their frustration with subtle, whole-body communication.
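
As a rough illustration of how breathing pace could be tied to emotional state, the sketch below bakes a per-frame chest offset that an animator might layer over captured motion. The state names, breathing rates, amplitude, and the 25 frames-per-second default are all assumptions made for this example; none of them comes from the production.

```python
import math

# Illustrative breathing rates in breaths per minute (assumed values, not measured data).
BREATHS_PER_MINUTE = {"calm": 12.0, "frustrated": 18.0, "frightened": 30.0}


def chest_offset(time_s: float, state: str = "calm", amplitude: float = 0.01) -> float:
    """Return how far a hypothetical chest control is pushed outward at time_s (seconds).

    The offset rises smoothly from 0 to `amplitude` and back once per breath.
    """
    rate_hz = BREATHS_PER_MINUTE[state] / 60.0
    phase = 2.0 * math.pi * rate_hz * time_s
    return amplitude * 0.5 * (1.0 - math.cos(phase))


def sample_breathing(state: str, seconds: float, fps: int = 25) -> list[float]:
    """Bake one offset per frame so the curve can be layered onto captured data."""
    frame_count = int(seconds * fps)
    return [chest_offset(frame / fps, state) for frame in range(frame_count)]
```

A sigh could be approximated in the same spirit by keying one slower, larger breath over the baked curve; the point is only that the rate, not just the presence, of chest movement carries the emotion.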

One approach to enhancing the accuracy of the production team’s standard 50-60 sensor animation techniques[9] is to shoot the same scene with sensors repositioned based on camera distance. Instead of recording the movements of the entire body, a second motion capture session could be conducted with the same number of sensors mapped to the upper half of the body. Since the disparity between real-world movement and animated movement is more apparent in close to mid shots, there is a need for higher-fidelity motion capture at this camera distance. The playback of a scene could be made more accurate by using more sensors over a smaller surface area. This simple change requires no new software, only a change in scheduling and management of the animation pipeline. The concept is to refocus the important visual cues based on the camera’s distance, as animators already do. In a close-up, for example, the capture records the location of the character’s knees, yet when the scene is rendered that information is discarded. It would be a better use of resources to record only the items specified by the shot plan.
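
The reallocation described above can be sketched as a small planning helper. The region names, the shot categories, and the budget of 56 sensors are hypothetical choices for illustration (the source only gives a range of 50-60 sensors), and an even split is just one possible policy.

```python
# Hypothetical body regions and shot types; not taken from the production's pipeline.
FULL_BODY = ("head", "torso", "arms", "hands", "hips", "legs", "feet")
VISIBLE_REGIONS = {
    "wide": FULL_BODY,
    "mid": ("head", "torso", "arms", "hands", "hips"),
    "close": ("head", "torso", "arms", "hands"),
}


def allocate_sensors(shot_type: str, total_sensors: int = 56) -> dict[str, int]:
    """Spread a fixed sensor budget evenly over the regions visible in the shot."""
    regions = VISIBLE_REGIONS[shot_type]
    base, extra = divmod(total_sensors, len(regions))
    # When the budget does not divide evenly, the first `extra` regions get one more sensor.
    return {
        region: base + (1 if index < extra else 0)
        for index, region in enumerate(regions)
    }
```

With these assumed numbers, a wide shot places 8 sensors on the torso while a close-up places 14 there and none on the legs, which is the higher density over a smaller area that the paragraph argues for.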

Analysis: World Physics and Implied Weight

Why Are We Always Walking on the Moon?

The characters move with a weightlessness characteristic of computer-generated animation. The pace and cadence of their movements, whether in a high-speed helicopter or on a city street, remain the same. It is a smooth and tranquil movement that rides along the gentle rails of mathematical expressions. As with much CGI, the effort to correct the imperfections of translating the analogue world of everyday experience through the clean lines of the digital world results in a watery netherworld of geometrically and trigonometrically correct translations and rotations. Like something from a science fiction film, the characters simply lose their life to the formulas that govern them.

Don’t Hurry; the Math Will Get Us There

In the selected scene, the characters move at the same cadence. Whether it is to move toward a much-desired kiss or to exclaim a clever quip, the characters’ movements are managed by formulas that calculate rates of movement and fit them into an ideal frame rate. The result is an artificially fluid movement; characters don’t shake, they have no weight to their own bodies, and they lack momentum. In the selected scene, when two characters are surprised by a third, the inertia of swift change is drowned by the computing of perfect curves meant to bring the arms back down to an idle position. In the natural world surprise is communicated through the whole body and by quick, imperfect motions: a hand opens wider than is comfortable, the abdomen tenses, the body prepares to react. In this scene, however, hands drop gracefully, and the upper body wavers as it always does.

To correct for this problem, animators should have embellished character movements more finely. In this case, much of the motion capture data seems accurate, if overly smoothed. Assuming that the motion capture actors performed well, the performances could benefit substantially from the hand of a skilled animator.

Although released the same year production of the Captain Scarlet series would have started, the work of Pullen and Bregler[10] on Motion Capture Assisted Animation would have helped to provide more natural movements. Their method of texturing mocap data speaks to the need for animators to blend motion provided through observation with an artist’s touch.

Why Are We Always Walking on the Moon? Where Did All the Mass Go?

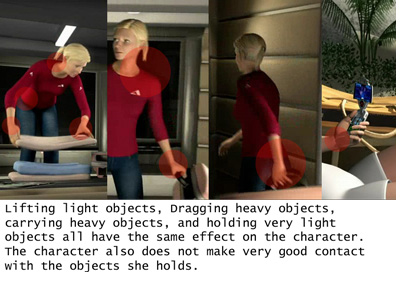

Throughout the entire animation, there is an apparent lack of physical weight. Not only do the characters seem to lack weight in their own bodies, but the props with which they interact all carry the same weight. Whether it is a large machine gun, a pistol, or a heavy suitcase, the items characters carry, push, or pull all produce minimal to no strain on the character.

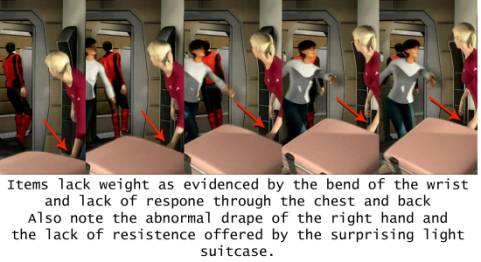

As the scene ends and the characters carry their recently packed suitcases, their wrists do not bend under the strain. The characters extend their arms instead of bringing the baggage close to their bodies. When the suitcase is picked up, the arm does not give as the muscle tenses to collect it.

Since it is impractical to suggest collision-based rendering or a physics engine for such simple needs, the production team should rely once again on the natural hand of an animator. Even without the opportunity to model underlying bones and muscles, the key frames can be tweened to imply resistance to objects. Animators can, for example, provide a delay between collecting an object and moving it. They can bring an arm down when items like a phone are collected, instead of providing a straight, unaffected path from the object’s original location to its goal location.
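
A minimal sketch of the two tweening ideas above, a pause after the grab and a path that sags instead of running straight, might look like the following. The function name, the 2D coordinates, and the `delay` and `dip` defaults are assumptions for illustration, not values from any production tool.

```python
def lift_position(t, start, goal, delay=0.2, dip=0.05):
    """Return the (x, y) position of a hand carrying an object at normalized time t in [0, 1].

    The hand holds still for the first `delay` fraction of the move, then eases
    toward `goal` along a path that sags by `dip` units at mid-travel.
    """
    if t <= delay:
        return start  # the character has grabbed the object but has not yet overcome its weight
    u = (t - delay) / (1.0 - delay)   # progress through the moving phase, 0..1
    s = u * u * (3.0 - 2.0 * u)       # smoothstep: ease in and ease out
    sag = dip * 4.0 * s * (1.0 - s)   # zero at both ends, equal to `dip` at the midpoint
    x = start[0] + (goal[0] - start[0]) * s
    y = start[1] + (goal[1] - start[1]) * s - sag
    return (x, y)
```

A heavier prop would simply use a longer `delay` and a deeper `dip`, so a suitcase and a phone no longer follow the same curve.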

Programmatically, the applications used to animate could be enhanced by adding virtual gravity, as is done in many video games. A constant force could affect character movements down the Y-axis. The simple code is computationally inexpensive, and might encourage animators to manage movements more carefully, as arms would descend and items would fall when pulled from bed.
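
The kind of per-frame update this refers to can be sketched in a few lines. The version below is an assumption-laden illustration: it uses metres and seconds, a flat floor at y = 0, a hypothetical `held` flag for props that are gripped or keyed by the animator, and a semi-implicit Euler step, which is only one common way games integrate a constant force.

```python
GRAVITY_Y = -9.81  # constant downward acceleration on the Y-axis (assumed units: m/s^2)


def step(position, velocity, dt, held=False, floor_y=0.0):
    """Advance an object by one frame of length dt seconds under constant gravity.

    `position` and `velocity` are (x, y, z) tuples. A held object is left where
    the animator put it and its velocity is reset.
    """
    if held:
        return position, (0.0, 0.0, 0.0)
    x, y, z = position
    vx, vy, vz = velocity
    vy += GRAVITY_Y * dt              # semi-implicit Euler: update velocity first,
    x, y, z = x + vx * dt, y + vy * dt, z + vz * dt   # then move with the new velocity
    if y <= floor_y:                  # crude floor contact: stop at the floor, no bounce
        y, vy = floor_y, 0.0
    return (x, y, z), (vx, vy, vz)
```

Calling `step` once per frame for every unheld prop is cheap, and an item released in mid-air would then fall rather than hover until its next key frame.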

Analysis: Movement of Hair, Skin, and Clothing

Why Isn’t My Hair Moving?

The modeling and animation of hair is an ongoing challenge for many animation teams. The reason realistic hair and skin are so important to an audience is that they provide situational cues. If it is windy, hair blows. If it is cold, the skin is ruddy. If it is hot, we sweat. If we are nervous, tired, or excited, the dermal layers of the human body indicate it. As with many fast animation techniques, the characters in this series are made plastic through the simplicity of their skin and hair. These characters have arguably more in common with _______ toys than with human beings.

The research of Ming et al.[11] and Guang[12] indicates some cost-effective solutions for the accurate modeling of hair. Some hair systems are breeds of particle systems, which were sorely needed in scenes outside this discussion.

The most problematic movement of hair is demonstrated in female character 1’s ponytail. It moves as though it were a single rigid body whose base is mounted on a pivot. It swings, but the hair does not separate. It is more subtly a problem for female character 2, as her hair is an actual rigid body that does not move. Short-term, low-budget solutions for this problem in interactive and cinematic animation include remodeling the character with a helmet or other items that prevent audiences from seeing the hair at all.

My One Complexion Is Perfect

Skin has multiple layers. While the average person does not notice these individual layers when speaking to another person, the way each of those layers interacts with the environment is apparent to them. When a person turns red, it is clear the outer layers of the skin are not turning red, but that something behind the skin is turning red. When a person is worried, it is clear that their skin is not changing, but is being changed by something behind it. The characters in this series lack that fine differentiation. Real skin deforms based on the interaction of more than one layer.

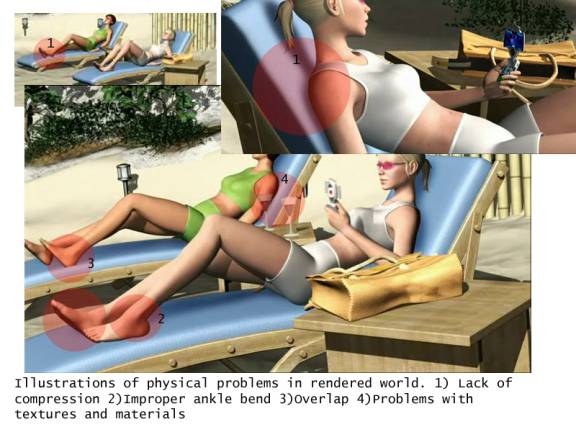

Save for the anonymous antagonist at the start of the episode, Captain Scarlet characters are one-dimensional in this respect. They lack an inherent or implied sub-dermal layer composed of more skin, muscle, and skeleton. This lack of a multi-layer model is so prevalent that at times the characters actually bend in artificial, elastic ways. Careful study indicates an abnormal curvature of the ankle while the women are sitting in the lounge chairs. It seems as though the joints have more range of motion than the average person’s do. Conversely, there are no deformations of the skin when the women rise from the chairs. Visually it is implied that the skin is neither tight nor loose. Instead, a hollow, thin layer surrounds a loosely articulated puppet. In short, the programmatically modeled structure is as apparent as that of Anderson’s original models.

If time and budget permitted, the production team could benefit from the work of Park[13] and James[14]. Multilayered skeletal models and accurate skinning would help the production, but their computational and budget expenses probably exceed this production’s limits. Careful examination reveals problems such as toes that don’t curl when the women move.

Skeletal modeling systems also would not solve the problem of the painted surfaces of the characters’ clothes and eyebrows. While, for example, the women’s sunbathing outfits imply clothing by the shape of the geometry, they do not deform adequately. Even the tightest Lycra bathing suit deforms differently than the body under it. This suggests that these objects do not have the proper geometry to bend and twist with their own physics. For cloth modeling I would suggest a review of the Baraff and Witkin SIGGRAPH paper on Large Steps in Cloth Simulation[15]. Although nearly 8 years old, the paper’s approach is computationally efficient, which makes it appropriate for this level of animation.

The animators of the popular Shrek feature film of 2001 employed an approach that might have solved the problem of artificially textured skin[16]. Using the fundamentals of oil painting, they combined multiple textural layers to emulate the skin. An outer layer, for example, would be a largely opaque, slightly reflective texture that provides the sense of oils on the skin. The second layer demonstrates substance with color that is more diffuse and has lower specular and emissive values. The third layer would employ deeper color, and can be manipulated dynamically to emphasize states like anger (more red) or fear (more white). This technique could theoretically help add realism by giving lighting calculations more diverse normals from which to calculate conditions. A shirt, for example, might have a shiny base, but a rough layer of worn fibers that filter the refracted light.
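
To make the layering idea concrete, the sketch below composites three flat colors with a standard "over" blend and tints only the deepest layer by emotional state. It is not the Shrek pipeline: the layer colors, the opacity values, and the function names are placeholders invented for this example, and the opacities are set low enough that the deeper layers remain visible.

```python
# Placeholder layer colors (RGB in 0..1) and opacities; illustrative assumptions only.
SURFACE = ((0.95, 0.80, 0.70), 0.35)   # outer, oily layer
MIDDLE = ((0.85, 0.60, 0.50), 0.50)    # diffuse body of the skin
DEEP_BASE = (0.60, 0.30, 0.25)         # deepest layer at rest
ANGER = (0.80, 0.10, 0.10)             # "more red"
FEAR = (1.00, 1.00, 1.00)              # "more white"


def lerp(a, b, t):
    """Linearly interpolate between two RGB tuples."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def over(top_rgb, top_alpha, bottom_rgb):
    """Composite a partly transparent layer over an opaque one."""
    return tuple(top_alpha * t + (1.0 - top_alpha) * b for t, b in zip(top_rgb, bottom_rgb))


def skin_color(emotion_rgb, amount):
    """Blend the three layers, shifting only the deepest one toward the emotion color."""
    deep = lerp(DEEP_BASE, emotion_rgb, amount)
    middle_rgb, middle_alpha = MIDDLE
    surface_rgb, surface_alpha = SURFACE
    return over(surface_rgb, surface_alpha, over(middle_rgb, middle_alpha, deep))
```

Because the change happens beneath the outer layers, the result reads as something turning red behind the skin rather than the surface itself being repainted.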

Same Old Problems: New World

Deadlines: The Biggest Enemy of All

Just as traditional

animators agreed to short fallings in the final product to meet deadlines,

existing CGI animators produce, and publish their mistakes in the name of

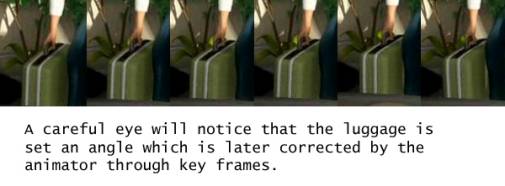

deadlines. The most egregious is the

sliding that occurs in this scene.

Likely a remnant of improper initial blocking, a critical eye will

notice that a suitcase slides across the floor after it is placed. In

compliment, the male character also slides.

It would normally be conceivable that this is the result of field of

view change. However, reference objects in the scene, such as a table or other

characters indicate that this is not the case. It is more likely that these

items were moved to prevent them from being occluded, keep them from being

clipped, or prevent them from overlapping when composited.

To alleviate this problem, the simplest solution is to move the camera toward the scene so that the sliding occurs off camera. The production team likely attempted this, as the camera shifts, somewhat artificially, to a nearly canted angle on the intruding female character 2.

If it is not reasonable to move the camera, then it might be reasonable to obscure the sliding element with some scene element in the foreground. Both of these techniques have been used in cel animation when production schedules are tight (show example if you can find one). The solutions are reasonable when there is not enough time to re-render the entire scene.

Issues I Ran Out of Space to Discuss:

The following are a few bullet-pointed items worth mentioning that the 2500-word limit prevents me from discussing in depth:

- Shadows change: multiple light sources seem to create shadows of varying size that sometimes project in the wrong direction.
- Shadow placement wrong on phone and leg (11:46) – shadow on leg not on thigh.
- Eyes don’t move behind the eyelids.
- No muscle flex when she moves herself up the chair (11:46).
- 2nd girl is going through her mat (11:46).
- Mat does not deform (11:46).
- 11:39 re-rendered as a different scene and later edited in – different light source, allows them to render more polygons in less time (tight shot), but shadows displaced to right instead of left.
- 8:02 – Paul slides away, as the background is locked (same as suitcase).

Conclusion

While the Captain Scarlet series does an excellent job of demonstrating the vast improvements the art of animation has achieved, it is clear that many of the old challenges persist. Like the Supermarionated puppets of Anderson’s past, the new Captain Scarlet CGI lacks accurate human movement and convincing world physics. These shortcomings are best illustrated by the analysis of hair, skin, clothing, whole-character movement, and the interaction of prop and character.